You sent 14 interactive demos last quarter. Your CRM says three of those deals closed. But when leadership asks which demos influenced which deals, you're guessing.

This is the core disconnect. Demo engagement data (views, clicks, completion rates) lives in your demo platform. Pipeline and revenue data lives in your CRM. Nobody connects them. You have anecdotes, not attribution.

The problem gets worse as deals get more complex. According to Gartner, B2B buying committees now average 6 to 10 stakeholders. That means multiple people are viewing your demos across multiple sessions, and you're tracking none of it at the opportunity level.

This article gives you a practical system for demo ROI tracking: connecting what happens inside your demos to what happens inside your pipeline. You'll walk away with a step-by-step process, specific benchmarks, and a monthly optimization framework.

What's inside

This guide covers the full implementation path for tracking demo ROI, from defining your demo types and setting up CRM integration to building attribution models and running monthly optimization cycles. It's written for AEs, sales enablement leads, and RevOps analysts who need to prove that demos influence pipeline and revenue, not just generate engagement metrics.

Every section includes specific outputs you should produce before moving forward. Treat it as a project plan, not just a read.

TL;DR

- Demo ROI tracking connects demo engagement metrics (completion rates, step interactions, time spent) to pipeline outcomes (demo-to-opportunity conversion, revenue influenced, sales cycle impact).

- Most teams fail because they track engagement and pipeline in separate systems with no attribution layer between them.

- The fix starts with CRM integration: syncing demo engagement events to opportunity records so every interaction is tied to a deal.

- Three metrics matter most for AEs: demo-to-meeting conversion, stakeholder engagement depth, and revenue influenced per demo.

- Monthly optimization (reviewing drop-off points, A/B testing flows, updating content) separates teams that improve from teams that just report.

- Platforms like Guideflow sync session-level engagement data directly to CRM records, closing the gap between demo activity and pipeline attribution.

What is demo ROI tracking

Demo ROI tracking is the practice of measuring how product demos (interactive, sandbox, live, or recorded) contribute to pipeline generation, deal progression, and closed revenue. It connects what prospects do inside a demo to what happens inside your sales process.

This is different from demo analytics. Demo analytics tells you that 200 people viewed your demo last month and 65% completed it. Demo ROI tracking tells you that 14 of those viewers became opportunities, 3 became closed-won deals, and the average deal that included a demo view closed 11 days faster than deals without one.

Here's a concrete example. An AE sends a personalized interactive demo to a VP of Ops at a mid-market account. The VP completes 85% of the demo, spending 4 minutes on the integration setup steps. Two days later, the CFO opens the same demo link and views the pricing section. Within 48 hours of the CFO's view, the deal moves from Stage 2 to Stage 3.

Demo ROI tracking captures all of that. Demo analytics captures only the first part.

Why most teams get it wrong

The engagement-to-revenue gap is the root cause. Teams collect demo data (views, completion, clicks) but it stays siloed in the demo platform dashboard. Meanwhile, CRM records don't automatically reflect demo interactions, so pipeline reports can't credit demos for anything.

Then there's the "last touch" trap. Most attribution setups default to last touch, which gives credit to the final meeting before a deal closes. The demo that warmed the buying committee three weeks earlier gets zero credit, even though it's the reason the CFO agreed to the evaluation call.

The result: AEs know demos help close deals. They can feel it. But they can't prove it with data, and leadership won't fund what can't be measured.

Demo analytics vs. demo ROI tracking

Key principles of effective demo ROI tracking

1: Attribution starts at the CRM, not the demo platform

Your demo platform captures engagement. Your CRM captures pipeline. If the two aren't connected at the opportunity level, you're building reports on incomplete data.

Application: Before launching any demo, confirm that your demo platform syncs engagement events (views, completions, specific steps clicked) to the CRM contact record and associated opportunity. In Salesforce, this typically means custom fields or activity logging on the opportunity object. In HubSpot, it means mapped contact properties and deal associations. Test the sync with one live demo send before scaling.

2: Measure the signal, not the noise

Not every demo engagement metric matters equally. For an AE, the metrics that predict deal outcomes are more valuable than the metrics that describe content performance.

Application: Prioritize three tiers of metrics. Tier 1 (revenue metrics): demo-influenced pipeline, demo-to-closed-won conversion rate, revenue influenced per demo. Tier 2 (intent signals): stakeholder engagement depth, return visits, time on pricing or ROI steps. Tier 3 (content metrics): completion rate, drop-off points, A/B test results. Report tier 1 to leadership. Use tier 2 for deal prioritization. Use tier 3 for demo optimization.

3: Segment tracking by deal type and buyer stage

A top-of-funnel marketing demo on a landing page and a mid-funnel personalized demo sent by an AE serve different purposes. Measuring them with the same benchmarks produces misleading data.

Application: Create separate tracking segments. (a) Marketing demos: embedded on website, gated or ungated. (b) Sales demos: sent 1:1 or personalized per account. (c) Post-sale demos: onboarding, training, expansion. Each segment gets its own benchmarks and attribution model. A 15% demo-to-opportunity conversion rate is strong for a sales demo but unrealistic for an ungated landing page embed.

4: Optimize monthly, not quarterly

Demo content decays. Buyer expectations shift. A demo that converted at 25% in January might convert at 12% in April because the product UI changed or a competitor launched a new feature.

Application: Run a monthly review cycle. (1) Check conversion rates by demo type. (2) Identify the highest drop-off step in each demo. (3) A/B test one change per demo (CTA placement, step order, personalization depth). (4) Update demos when the product ships changes that affect the flow. Quarterly reviews are too slow. By the time you spot a problem, you've lost three months of pipeline data.

How to measure demo ROI: step-by-step process

Step 1: Define your demo types and their role in the sales process

What to do: List every demo format your team uses (interactive demos, sandboxes, live demos, recorded walkthroughs, demo centers). Map each to a funnel stage (awareness, evaluation, decision, post-sale).

Why it matters: You can't measure ROI if you don't know what you're measuring. A marketing demo on a landing page has different success criteria than a personalized demo sent after a discovery call. Tracking demo engagement without this context produces numbers that mislead rather than inform.

Output: A simple table mapping demo type to funnel stage to primary success metric to owner (marketing, AE, SE, CS). This takes 20 minutes and clarifies everything downstream.

Step 2: Set up CRM integration and attribution fields

What to do: Connect your demo platform to your CRM (Salesforce, HubSpot, or similar). Create custom fields or activity types that log demo engagement events on contact and opportunity records. At minimum, capture: demo viewed (yes/no), demo completion percentage, number of unique stakeholders who viewed, date of first and last view.

Why it matters: Without this, demo data stays in a silo. AEs can't see demo engagement in their deal view. RevOps can't include demos in pipeline reports. Leadership can't credit demos in forecast reviews.

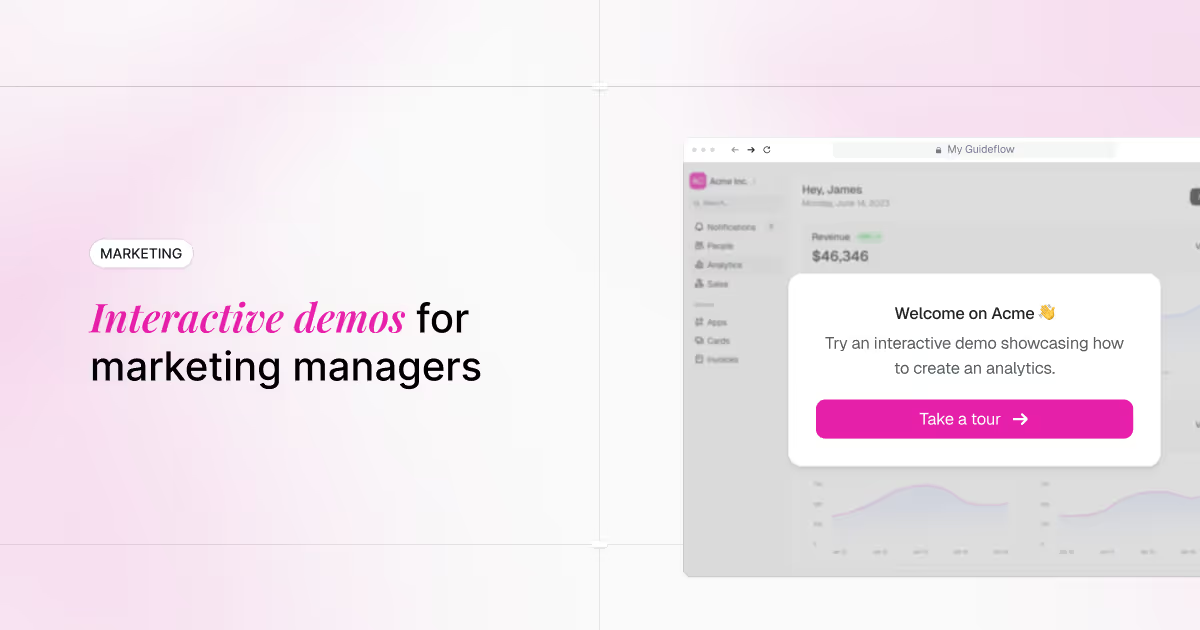

Platforms like Guideflow sync session-level engagement data, including steps viewed, time spent, and completion rate, directly to CRM contact and opportunity records. Every demo interaction appears in the deal timeline without manual logging. If your platform doesn't support native sync, use Zapier or a middleware tool to push events. You can explore all available integrations to see what's supported natively.

Output: CRM fields configured and tested with at least one demo send. Confirm data flows correctly before scaling.
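
To make the minimum field set from this step concrete, here is a minimal Python sketch that collapses raw demo view events into the four values named above. The `DemoEvent` shape and the field names are hypothetical, not any CRM's or demo platform's real schema, and taking the highest completion percentage seen (rather than the latest) is an assumption you may want to change.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DemoEvent:
    """One demo view by one contact (hypothetical event shape)."""
    opportunity_id: str
    contact_email: str
    viewed_on: date
    completion_pct: float  # 0-100


def summarize_for_opportunity(events: list) -> dict:
    """Collapse raw view events into the minimum fields for one opportunity."""
    if not events:
        return {
            "demo_viewed": False,
            "max_completion_pct": 0.0,
            "unique_stakeholders": 0,
            "first_view": None,
            "last_view": None,
        }
    return {
        "demo_viewed": True,
        # Assumption: store the highest completion seen across all views.
        "max_completion_pct": max(e.completion_pct for e in events),
        # Emails are lowercased so the same person is not counted twice.
        "unique_stakeholders": len({e.contact_email.lower() for e in events}),
        "first_view": min(e.viewed_on for e in events),
        "last_view": max(e.viewed_on for e in events),
    }


# Illustrative data only: two people, three views on one opportunity.
events = [
    DemoEvent("opp-001", "vp.ops@example.com", date(2024, 3, 4), 85.0),
    DemoEvent("opp-001", "cfo@example.com", date(2024, 3, 6), 30.0),
    DemoEvent("opp-001", "VP.Ops@example.com", date(2024, 3, 8), 40.0),
]
summary = summarize_for_opportunity(events)
print(summary)
```

Whatever pushes data into your CRM (native sync or middleware) would write a summary like this to the opportunity record. Comparing it against what actually appears in the CRM is a quick way to run the test send.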

Step 3: Establish baseline metrics and benchmarks

What to do: Before optimizing, capture your current state. Run demos for 30 days with tracking active. Record: play rate, completion rate, demo-to-meeting conversion, demo-to-opportunity conversion, average stakeholders per demo view.

Why it matters: You need a baseline to measure improvement against. "Our completion rate improved by 15%" means nothing without knowing where you started. Aim for a minimum of 50 demo sessions per type; with fewer, a handful of sessions can swing the rates.

Output: A baseline report with current metrics across each demo type, including sample sizes.
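
A baseline report of this kind can be produced from five raw counts per demo type. The sketch below is one way to do it, under stated assumptions: the count names are hypothetical, play rate is taken as plays divided by impressions, and the other rates use plays as the denominator. Average stakeholders per demo view is left out because it needs per-account data. All numbers are illustrative.

```python
from typing import Optional

MIN_SESSIONS = 50  # minimum sample per demo type suggested in Step 3


def pct(numerator: int, denominator: int) -> Optional[float]:
    """Percentage rounded to one decimal, or None when there is no data."""
    if denominator == 0:
        return None
    return round(100 * numerator / denominator, 1)


def baseline_report(counts: dict) -> dict:
    """Turn raw 30-day counts for one demo type into baseline rates."""
    plays = counts["plays"]
    return {
        "play_rate": pct(plays, counts["impressions"]),
        "completion_rate": pct(counts["completions"], plays),
        "demo_to_meeting": pct(counts["meetings"], plays),
        "demo_to_opportunity": pct(counts["opportunities"], plays),
        "sample_size": plays,
        "enough_data": plays >= MIN_SESSIONS,
    }


# Illustrative counts for one demo type over 30 days.
sales_demo = {
    "impressions": 400,
    "plays": 200,
    "completions": 130,
    "meetings": 30,
    "opportunities": 14,
}
report = baseline_report(sales_demo)
print(report)
```

Keeping `sample_size` next to every rate makes it obvious which baselines are still too thin to act on.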

Step 4: Build your attribution model

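What to do: Decide how you'll credit demos in the pipeline. There are three common models. First-touch: the demo was the first interaction that created the opportunity. Last-touch: the demo was the last interaction before the deal moved to a new stage. Multi-touch: the demo is credited as one of several interactions that influenced the deal.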

Why it matters: Attribution model choice determines how demo ROI shows up in reports. First-touch overcredits top-of-funnel demos. Last-touch overcredits late-stage demos. Multi-touch is the most accurate but requires more setup and RevOps support. If you're evaluating tools to support this, our roundup of the best attribution software tools covers the leading platforms.

The honest trade-off: if you're starting from zero, multi-touch attribution is probably too complex for your first iteration. Begin with "demo-influenced" attribution (any opportunity where at least one contact viewed a demo). You can refine to weighted multi-touch once your data is clean.

Output: A documented attribution model with rules for how demo interactions are credited. Share with RevOps and sales leadership so everyone reads the same reports.
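
The "demo-influenced" rule from this step is simple enough to sketch in a few lines of Python. The record layout (`id`, `amount`, `contact_ids`) is a hypothetical export format, not a real CRM schema, and the amounts are made up.

```python
def demo_influenced_pipeline(opportunities: list, viewer_contact_ids) -> dict:
    """Flag opportunities where at least one contact viewed a demo."""
    viewers = set(viewer_contact_ids)
    influenced = [o for o in opportunities if viewers & set(o["contact_ids"])]
    total = sum(o["amount"] for o in opportunities)
    influenced_amount = sum(o["amount"] for o in influenced)
    return {
        "influenced_ids": [o["id"] for o in influenced],
        "influenced_amount": influenced_amount,
        "share_of_pipeline_pct": (
            round(100 * influenced_amount / total, 1) if total else None
        ),
    }


# Illustrative open pipeline and the set of contacts with any demo view.
opportunities = [
    {"id": "opp-A", "amount": 40_000, "contact_ids": ["c1", "c2"]},
    {"id": "opp-B", "amount": 25_000, "contact_ids": ["c3"]},
    {"id": "opp-C", "amount": 60_000, "contact_ids": ["c4", "c5"]},
]
viewers = ["c2", "c5", "c9"]

result = demo_influenced_pipeline(opportunities, viewers)
print(result)
```

The rule is deliberately binary. It says a demo was present in the deal, not how much credit it deserves, which is why it works as a first iteration before weighted multi-touch.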

Step 5: Create your reporting dashboard

What to do: Build a dashboard (in your CRM, BI tool, or demo platform) that displays: (a) total demos sent and viewed, by type, (b) demo-to-opportunity conversion rate, (c) revenue influenced by demos (total pipeline value of opportunities where a demo was viewed), (d) average deal velocity for demo-influenced vs. non-demo-influenced deals, (e) top-performing demos by conversion rate. Guideflow's built-in analytics features can surface much of this data natively.

Why it matters: If the data isn't visible, it doesn't get used. AEs need to see demo engagement in their deal view. Managers need pipeline-level reporting. Marketing needs channel-level performance.

Output: A live dashboard accessible to AEs, RevOps, and sales leadership. Review weekly in pipeline meetings.
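
For dashboard item (d), deal velocity, the comparison comes down to average days from creation to close for each group. The sketch below assumes a hypothetical list of closed deals that already carry a `demo_influenced` flag. The dates are illustrative, not benchmarks.

```python
from datetime import date
from statistics import mean


def avg_days_to_close(deals: list) -> float:
    """Average number of days between creation and close."""
    return mean((d["closed"] - d["created"]).days for d in deals)


# Illustrative closed deals with a precomputed demo_influenced flag.
closed_deals = [
    {"created": date(2024, 1, 2), "closed": date(2024, 2, 5), "demo_influenced": True},
    {"created": date(2024, 1, 10), "closed": date(2024, 2, 20), "demo_influenced": True},
    {"created": date(2024, 2, 1), "closed": date(2024, 3, 2), "demo_influenced": True},
    {"created": date(2024, 1, 5), "closed": date(2024, 2, 22), "demo_influenced": False},
    {"created": date(2024, 1, 15), "closed": date(2024, 2, 28), "demo_influenced": False},
    {"created": date(2024, 2, 10), "closed": date(2024, 3, 27), "demo_influenced": False},
]

with_demo = avg_days_to_close([d for d in closed_deals if d["demo_influenced"]])
without_demo = avg_days_to_close([d for d in closed_deals if not d["demo_influenced"]])
days_faster = without_demo - with_demo
print(with_demo, without_demo, days_faster)
```

A gap like this shows correlation, not proof that demos caused the faster close, so report it alongside sample sizes.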

Step 6: Run monthly optimization cycles

What to do: Every month, review: (a) Which demos have the highest and lowest conversion rates? (b) Where are the biggest drop-off points? (c) Are there demos with high engagement but low conversion (indicating a content or CTA problem)? (d) Are there demos with low engagement but high conversion (indicating strong intent, worth promoting more)?

Test one change per cycle. Adjust a CTA, reorder steps, add personalization, or update content.

Why it matters: Demo ROI is not a set-and-forget metric. The teams that improve are the ones that iterate monthly, not quarterly. Demo automation only pays off when you treat the demos as living assets.

Output: A monthly optimization log documenting what was changed, why, and the impact on conversion metrics.
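
Two of the monthly review questions lend themselves to a small script: finding the biggest drop-off point, and flagging demos whose engagement and conversion disagree. This is a rough sketch. The cutoff values are hypothetical placeholders you would replace with your own baselines, and completion rate stands in for "engagement".

```python
def largest_drop_off(viewers_per_step: list):
    """Return (step_number, pct_lost) for the step after which the largest
    share of viewers left. Steps are numbered from 1."""
    worst_step, worst_loss = None, 0.0
    for i in range(len(viewers_per_step) - 1):
        reached, continued = viewers_per_step[i], viewers_per_step[i + 1]
        if reached == 0:
            break
        loss = (reached - continued) / reached
        if loss > worst_loss:
            worst_step, worst_loss = i + 1, loss
    return worst_step, round(100 * worst_loss, 1)


def review_flag(completion_pct: float, conversion_pct: float,
                completion_cutoff: float = 60.0,
                conversion_cutoff: float = 15.0) -> str:
    """Map a demo to the review questions (c) and (d) from this step.
    Cutoffs are assumptions, not benchmarks."""
    high_engagement = completion_pct >= completion_cutoff
    high_conversion = conversion_pct >= conversion_cutoff
    if high_engagement and not high_conversion:
        return "check content or CTA"
    if high_conversion and not high_engagement:
        return "strong intent, promote more"
    return "no flag"


# Illustrative: viewers remaining at each of five steps.
step, lost = largest_drop_off([200, 180, 120, 110, 100])
print(step, lost)
print(review_flag(80.0, 5.0))
print(review_flag(45.0, 30.0))
```

The step returned by `largest_drop_off` is a natural candidate for the one change you test in that cycle.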

Best practices for demo ROI tracking

Tag every demo with campaign source and deal stage

This connects directly to Mistake 1. Use UTM parameters or demo platform tagging to identify where each demo was sent and at what stage. Without this, your demo attribution data is incomplete from the start.

Every demo should carry three tags at minimum: source (how the prospect found it), stage (where the deal was when the demo was sent), and type (interactive, sandbox, recorded). This makes reporting possible and debugging easy.
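
If your demo platform has no built-in tagging, the three tags can ride along as URL query parameters. The sketch below uses Python's standard `urllib.parse`. `utm_source` is the conventional UTM parameter, while `deal_stage` and `demo_type` are hypothetical parameter names chosen for illustration, as is the demo URL.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_demo_url(base_url: str, source: str, stage: str, demo_type: str) -> str:
    """Append source, stage, and type tags, keeping any existing parameters."""
    parts = urlsplit(base_url)
    query = dict(parse_qsl(parts.query))
    query.update({
        "utm_source": source,    # how the prospect found the demo
        "deal_stage": stage,     # hypothetical name: stage when the demo was sent
        "demo_type": demo_type,  # hypothetical name: interactive, sandbox, recorded
    })
    return urlunsplit(parts._replace(query=urlencode(query)))


tagged = tag_demo_url(
    "https://demo.example.com/d/abc123?lang=en",
    source="ae_email",
    stage="stage_2",
    demo_type="interactive",
)
print(tagged)
```

Generating links through one helper like this, rather than typing parameters by hand, is what keeps tag values consistent enough to report on.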

Track stakeholder reach, not just individual engagement

This connects to Mistake 3. For enterprise deals, the number of unique stakeholders who viewed the demo is a stronger pipeline signal than any single viewer's completion rate.

Set up alerts when 3+ contacts from the same account view a demo within a week. This buyer intent signal indicates the buying committee is actively evaluating. Prioritize these deals in your follow-up cadence.
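
The alert rule above (3+ contacts from one account within a week) can be sketched as a rolling-window check. The input format, a list of `(account, contact, date)` tuples, is an assumption about what your demo platform or CRM can export. The accounts and dates are made up.

```python
from collections import defaultdict
from datetime import date, timedelta


def accounts_to_alert(views: list, min_contacts: int = 3, window_days: int = 7) -> list:
    """Return accounts where at least min_contacts unique contacts viewed
    a demo within any window of window_days days."""
    by_account = defaultdict(list)
    for account, contact, viewed_on in views:
        by_account[account].append((viewed_on, contact))

    alerts = []
    for account, rows in by_account.items():
        rows.sort()
        for start_day, _ in rows:
            end_day = start_day + timedelta(days=window_days)
            contacts = {c for d, c in rows if start_day <= d < end_day}
            if len(contacts) >= min_contacts:
                alerts.append(account)
                break
    return sorted(alerts)


# Illustrative view log.
views = [
    ("acme", "a@acme.test", date(2024, 3, 1)),
    ("acme", "b@acme.test", date(2024, 3, 3)),
    ("acme", "c@acme.test", date(2024, 3, 6)),
    ("globex", "x@globex.test", date(2024, 3, 1)),
    ("globex", "y@globex.test", date(2024, 3, 2)),
    ("globex", "x@globex.test", date(2024, 3, 4)),
    ("initech", "p@initech.test", date(2024, 3, 1)),
    ("initech", "q@initech.test", date(2024, 3, 5)),
    ("initech", "r@initech.test", date(2024, 3, 20)),
]
print(accounts_to_alert(views))
```

Counting unique contacts rather than total views matters here: one champion opening the demo three times is a different signal from three stakeholders opening it once each.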

Use behavioral signals to prioritize follow-up

Specific buyer intent signals to watch: return visits (viewed the demo more than once), time spent on pricing or ROI steps (indicates evaluation, not browsing), and sharing the demo link internally (multiple unique viewers from the same domain).

Any combination of two or more of these signals is a strong outreach trigger. These aren't vanity metrics. They're deal readiness indicators that should change how quickly and how personally you follow up.
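
The "two or more signals" rule translates directly into a small check. In this sketch the input names and the 60-second threshold for pricing or ROI steps are assumptions. The text above does not specify how much time counts as meaningful.

```python
def intent_signals(view_count: int,
                   seconds_on_pricing_or_roi: float,
                   unique_viewers_same_domain: int,
                   min_seconds: float = 60.0) -> list:
    """List which of the three buyer intent signals are present."""
    signals = []
    if view_count > 1:
        signals.append("return_visit")
    if seconds_on_pricing_or_roi >= min_seconds:  # threshold is an assumption
        signals.append("pricing_or_roi_time")
    if unique_viewers_same_domain > 1:
        signals.append("shared_internally")
    return signals


def should_prioritize(signals: list) -> bool:
    """Two or more signals together are treated as an outreach trigger."""
    return len(signals) >= 2


hot = intent_signals(view_count=3, seconds_on_pricing_or_roi=95,
                     unique_viewers_same_domain=1)
cold = intent_signals(view_count=1, seconds_on_pricing_or_roi=10,
                      unique_viewers_same_domain=2)
print(hot, should_prioritize(hot))
print(cold, should_prioritize(cold))
```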

A/B test demo flows, not just CTAs

Most teams test button copy. The higher-impact test is flow structure: does a 5-step demo outperform a 12-step demo? Does starting with the ROI calculator step convert better than starting with the feature walkthrough?

Test one structural change per month. Track the impact on both completion rate and conversion rate. A shorter demo that converts at 20% is more valuable than a longer demo that converts at 8%, regardless of how "complete" the longer version feels.
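
Before declaring a winner, it helps to check that the gap between two flows is larger than chance would explain. The sketch below uses a two-proportion z-test, a standard statistical check that this guide does not prescribe; it is offered as one reasonable option. The session and conversion counts are illustrative.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion
    rate between variant A and variant B."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("No variation in outcomes; the test is undefined.")
    z = (p_a - p_b) / se
    # Standard normal CDF written with erf, then doubled for two sides.
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value


# Illustrative: 5-step flow converts 40 of 200, 12-step flow 16 of 200.
z, p_value = two_proportion_z_test(40, 200, 16, 200)
print(round(z, 2), p_value)
```

With small monthly volumes the p-value will often be inconclusive. In that case, let the test run another cycle rather than shipping a change based on noise.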

Align demo content to deal stage

A prospect in discovery needs a different demo than a prospect in technical evaluation. Map your demo library to deal stages and track which stage-specific demos produce the highest demo-to-opportunity conversion rates.

This is where B2B demo strategies start to compound. Instead of one generic demo for all prospects, you build a library of targeted assets. The data tells you which ones work at which stage. Personalizing each demo to the buyer's role and deal stage is what separates high-converting teams from the rest.

Audit your tracking setup monthly

This connects to Mistake 4. CRM integrations break. New demo types get created without proper tagging. Fields get deprecated. A 15-minute monthly check prevents data gaps that take weeks to diagnose.

Your audit checklist:

- Are all demo types tagged and syncing to CRM?

- Are new demos created in the last 30 days properly configured?

- Are any CRM fields returning null or incomplete data?

- Do the numbers in your demo platform match the numbers in your CRM?
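
The first and last checklist items can be partly automated by comparing view counts per demo type from both systems. This is a minimal sketch: the dictionaries stand in for exports from your demo platform and CRM, and the demo type names are hypothetical.

```python
def audit_sync(platform_counts: dict, crm_counts: dict, tolerance: int = 0) -> list:
    """Compare demo view counts per demo type and list discrepancies."""
    issues = []
    for demo_type, platform_n in sorted(platform_counts.items()):
        crm_n = crm_counts.get(demo_type)
        if crm_n is None:
            issues.append(f"{demo_type}: not syncing to CRM")
        elif abs(platform_n - crm_n) > tolerance:
            issues.append(f"{demo_type}: platform={platform_n}, crm={crm_n}")
    return issues


# Illustrative 30-day view counts from each system.
platform_counts = {"sales_interactive": 120, "marketing_embed": 300, "onboarding": 45}
crm_counts = {"sales_interactive": 120, "marketing_embed": 280}

issues = audit_sync(platform_counts, crm_counts)
for issue in issues:
    print(issue)
```

An empty list means the two systems agree. Anything else is the starting point for that month's 15-minute check.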

Common mistakes in demo ROI tracking

1: Tracking engagement without connecting it to pipeline

What it looks like: A dashboard shows 200 demo views last month. Nobody can tell you how many of those viewers became opportunities. The demo platform reports engagement. The CRM reports pipeline. The two never meet.

What works instead: Sync demo engagement events to CRM contact and opportunity records so every view is tied to a deal stage. This is a CRM integration problem, not a metrics problem.

2: Using completion rate as a standalone success metric

What it looks like: A 70% demo completion rate feels good. But the demos that convert best might have a 45% completion rate because prospects skip directly to the pricing section. They're not browsing. They're evaluating.

What works instead: Pair completion rate with conversion rate. A demo with 50% completion but 30% demo-to-meeting conversion is outperforming a demo with 90% completion and 5% conversion. The demo completion rate only matters in context.

3: Ignoring multi-stakeholder engagement

What it looks like: You track that one person viewed the demo. But in a deal with 6 to 10 stakeholders, the question is how many of them engaged. Single-viewer tracking misses the buying committee entirely.

What works instead: Track unique viewers per opportunity. Deals where 3+ stakeholders view the demo tend to close at higher rates than single-viewer deals. Measure stakeholder reach, not just individual engagement.

4: Setting up attribution once and never revisiting it

What it looks like: CRM fields were configured six months ago. Since then, three new demo types were added, UTM conventions changed, and half the data is missing or mistagged.

What works instead: Run a monthly attribution audit. Check that demo events are flowing to CRM, that new demo types are tagged correctly, and that no data gaps exist. Fifteen minutes a month prevents weeks of cleanup later.

5: Measuring demos in isolation from the rest of the sales process

What it looks like: Demo ROI is calculated as if the demo is the only touchpoint. In reality, the demo sits between a discovery call and a technical deep-dive. It's one piece of a multi-touch process.

What works instead: Use multi-touch attribution. Credit demos as one influence point in the deal, not the sole cause. This gives you a more accurate picture and a more defensible report for leadership.

What to do next

- Open your demo platform. Open your CRM. Check if demo engagement events appear on contact or opportunity records. If they don't, that's your first fix.

- List every demo your team uses, its funnel stage, its success metric, and its owner. This takes 20 minutes and clarifies what you're actually measuring.

- If your demo platform supports native CRM sync (Guideflow, for example, syncs to Salesforce and HubSpot), configure it for one demo type first. Test with a real demo send. Confirm the data appears in the CRM before rolling out to the full library.

- Multi-touch is ideal but requires RevOps support. If you're starting from zero, begin with "demo-influenced" (any opportunity where at least one contact viewed a demo). You can refine later.

- Block 30 minutes on the calendar, four weeks from today. Review the metrics from the benchmark table. Identify one demo to improve. Make one change. Log it.

Measurement and evaluation

Core metrics table

Interpreting the data

If demo-to-meeting conversion is below 10%, the problem is usually targeting (wrong audience) or CTA design (unclear next step), not demo content. Check who's receiving the demos and whether the call-to-action gives them a specific, low-friction next step.

If stakeholder depth is consistently 1, the demo isn't being shared internally. Add prompts like "Share this with your team" or personalize for multiple roles within the account. Guideflow's sharing capabilities make it easy to distribute demos via link, embed, or email so more stakeholders engage. In enterprise deals, single-viewer engagement is a weak signal.

If revenue influenced by demos is high but win rate is flat, demos are reaching pipeline but not influencing outcomes. Check whether demos are reaching decision-makers, not just champions. The CFO viewing the pricing section matters more than the SDR completing the full walkthrough. Pairing demo data with revenue intelligence platforms can help you pinpoint where deals stall.

Conclusion

Demo ROI tracking is not about collecting more data. It's about connecting the data you already have (demo engagement) to the data that matters (pipeline and revenue). The gap between the two is a CRM integration problem, not a metrics problem.

The AEs and teams that close this gap gain a concrete advantage: they can prove which demos influence deals, prioritize follow-up based on intent signals, and optimize their demo content monthly instead of guessing.